When Digital Spaces Stop Being Safe

The internet changed childhood forever. It opened doors for creativity, community, and connection. But it also opened doors for people who exploit children in ways families never anticipated. Parents juggle work, school, and life. They trust platforms that appear safe, believing that built-in controls and “kid-friendly” labels shield their children from harm. But what happens when safety systems give a false sense of security?

Scott Burch

Founder & Executive Director

The internet is part of childhood now. That reality is not changing.

For many families, a bedroom with a headset on looks safe. A familiar platform. A game millions of kids use. Built-in parental controls. Age gates. Moderation promises. It feels contained. Most of the time, it is.

But safety in digital spaces cannot rely on branding or assumption. It has to be built into the architecture itself.

Recent reporting has brought attention to a lawsuit filed by Los Angeles County against Roblox, alleging that the platform’s design and moderation systems failed to adequately protect children from grooming and exploitation. According to the county’s complaint, the platform presented itself as safe for young users while allegedly lacking meaningful safeguards such as effective age verification and functional parental controls. These are allegations in ongoing litigation, not findings of liability. Still, they raise serious questions about how child safety is implemented in large digital environments.

In a separate, publicly reported case, the family of a 13-year-old girl has filed a lawsuit alleging she was groomed through Roblox before being kidnapped. That case is also in active litigation. But the broader issue extends beyond one company or one courtroom. The larger question is this: are digital safety systems keeping pace with digital reality?

The scale of online exploitation makes that question impossible to ignore.

According to the National Center for Missing & Exploited Children, the CyberTipline received 16,902,083 reports of suspected child sexual exploitation in 2019. By 2023, that number had more than doubled to 36,210,368 reports in a single year. These reports include online enticement, grooming, child sexual abuse material, and related exploitation. (Source: NCMEC CyberTipline Reports, 2019–2023)

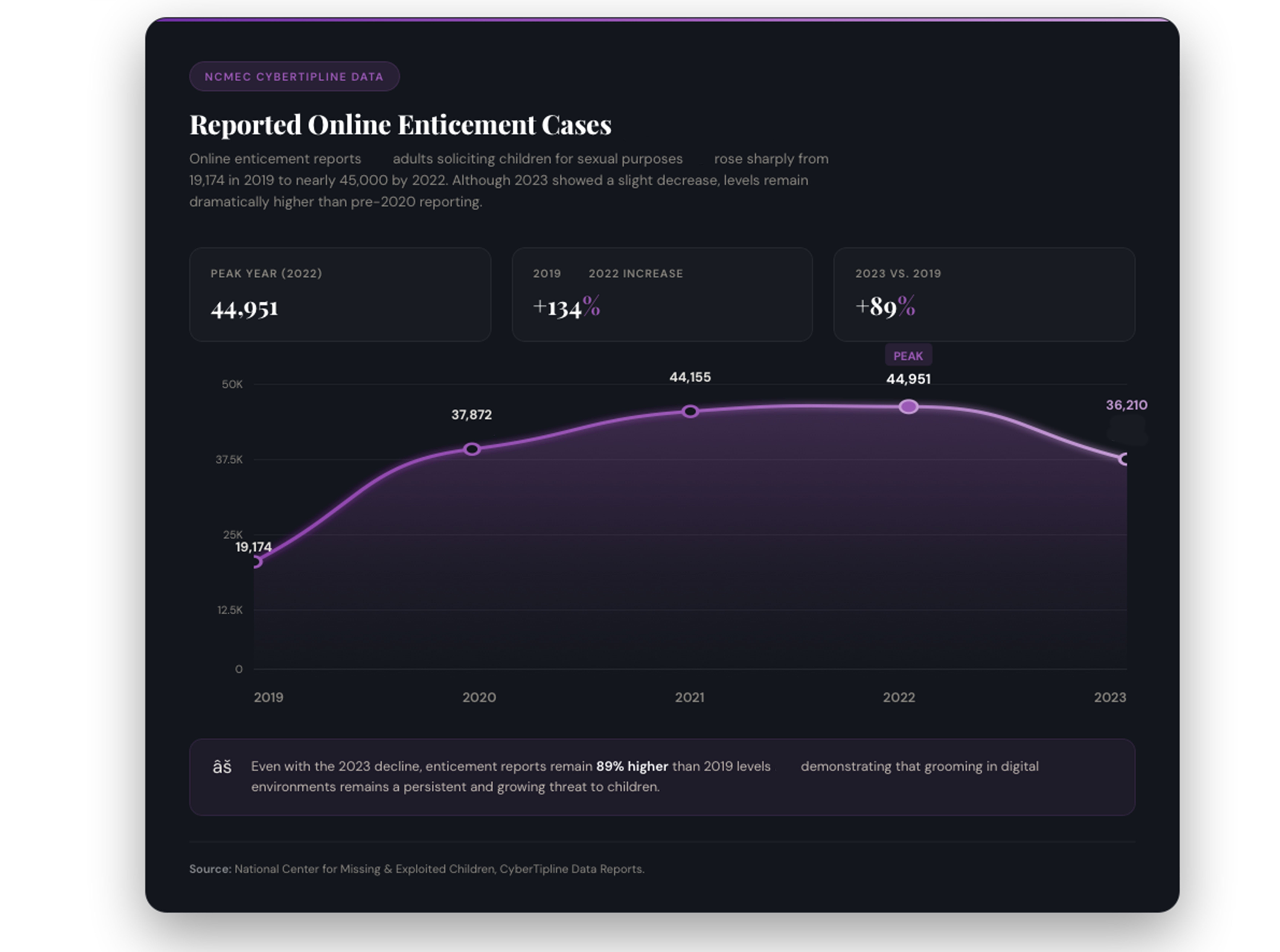

The rise is not only in overall reports. Online enticement, which includes adults soliciting children for sexual purposes, jumped from 19,174 reports in 2019 to 44,951 in 2022. Even with a decline in 2023 to 36,210 reports, the volume remains well above the 2019 level. (Source: NCMEC CyberTipline Annual Data)

Behind those numbers are children navigating digital spaces that were not always designed with their vulnerability in mind.

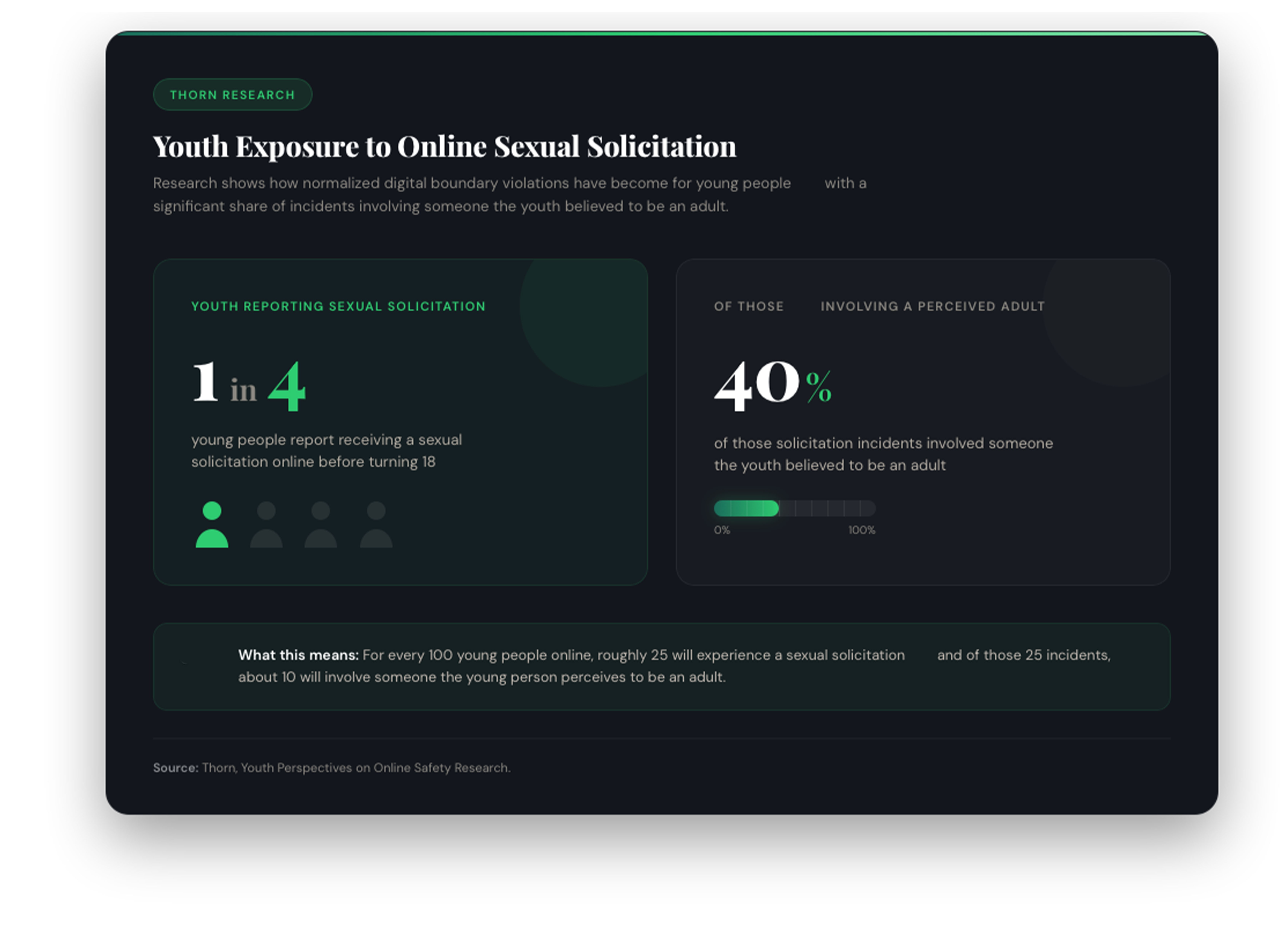

Research from Thorn indicates that approximately one in four young people report receiving a commercial sexual solicitation online before turning 18. About 40 percent of those incidents involved someone the youth believed to be an adult. (Source: Thorn, Youth Perspectives on Online Safety Research)

Grooming rarely looks dramatic at first. It begins with shared interests. Casual chat. Compliments. It moves from public spaces into private messages. It introduces secrecy. It tests boundaries slowly.

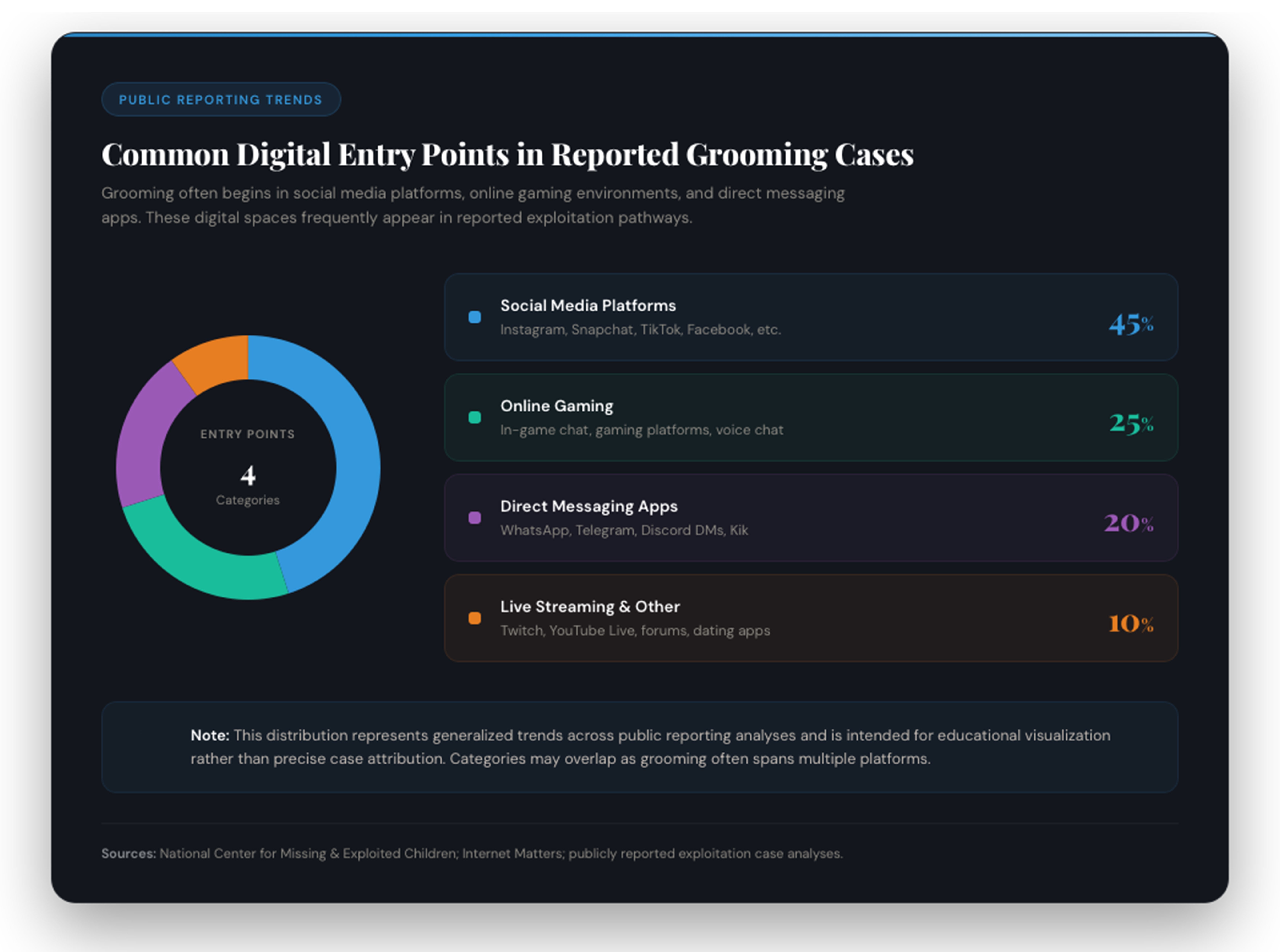

In many cases, contact begins in environments built for connection: social platforms, gaming spaces, messaging apps, and live-streaming services. Public reporting trends point to social media and online gaming as common entry points for initial contact. (Sources: NCMEC, Internet Matters, public exploitation reporting analyses)

None of this means every gaming platform is inherently dangerous. Millions of children use digital spaces safely every day. But when safety tools are unclear, inconsistently enforced, or easily bypassed, parents are left relying on systems that may not function as they appear.

And parents are not failing. They are working. They are stretched thin. They are trusting that safety systems advertised as protective actually protect.

That trust must be earned.

Platforms must design with safety at the center, not as a feature added later. Age verification should mean something. Reporting tools must function in real time. Moderation cannot rely solely on automation without human accountability. When exploitation is reported, response should be swift and transparent.

Lawmakers must understand the mechanics of digital platforms, not just the headlines. Oversight should be informed and continuous. When lawsuits surface, they should not be treated as isolated controversies but as signals of systemic gaps.

Young people need more than device restrictions. They need education. They need to understand how grooming works, how manipulation builds gradually, and how to recognize when secrecy is a red flag.

This is where solution meets responsibility.

At Room To Care, digital exploitation is not an afterthought. It is central to how vulnerability operates in the modern world. Our film, Through Their Eyes, will address the digital pathways that can lead to coercion, exploitation, and trafficking. Not just physical environments. Digital ones.

We are also developing a standalone program designed specifically for parents, teachers, and youth. It will focus on always-connected devices, online gaming spaces, social platforms, and the behavioral warning signs that matter most.

The goal is not to create fear. The goal is to create awareness paired with direction. Technology is not inherently harmful. But systems without strong accountability can become vulnerable to misuse. When that happens, children pay the price.

If you believe children deserve safer digital environments, stronger safeguards, and education that keeps pace with reality, we invite you to support the development of Through Their Eyes and our digital exploitation program.

Individual donations and corporate sponsorships help us build this work carefully and responsibly.

Donate at roomtocare.com/donate

Support via GiveButter at givebutter.com/RoomToCare2026

Text “RTC2026” to 53-555 to contribute

Because prevention in a digital world requires more than headlines. It requires design, accountability, and education working together.

Sources

Data and reporting cited in this article are drawn from publicly available research, annual reporting, and official press releases.

National Center for Missing & Exploited Children (NCMEC). CyberTipline Annual Reports and Data Reports, 2019–2023.

https://www.missingkids.org/cybertiplinedata

Thorn. Youth Perspectives on Online Safety Research.

https://www.thorn.org/press-releases/new-research-1-in-4-young-people-report-receiving-a-commercial-sexual-solicitation-online-before-turning-18/

Internet Matters. Online Grooming: What Parents Need to Know.

https://www.internetmatters.org/issues/online-grooming/learn-about-it/

Los Angeles County. LA County Sues Roblox for Unfair and Deceptive Business Practices That Endanger and Exploit Children (Press Release, February 19, 2026).

https://lacounty.gov/2026/02/19/la-county-sues-roblox-for-unfair-and-deceptive-business-practices-that-endanger-and-exploit-children/

The Guardian. Los Angeles Sues Roblox (February 20, 2026).

https://www.theguardian.com/games/2026/feb/20/los-angeles-sues-roblox-la-county

Milberg Coleman Bryson Phillips Grossman. 13-Year-Old Groomed and Kidnapped Through Roblox, Family Files Lawsuit.

https://milberg.com/news/13-year-old-groomed-kidnapped-through-roblox-family-sues-gaming-giant/
