When the Predator Doesn't Even Need to Be Real

1 month ago

Scott Burch

Founder & Executive Director

In the first six months of 2025, reports of AI-generated child sexual exploitation submitted to the National Center for Missing & Exploited Children increased by 6,341 percent. That is not a typo. From 6,835 reports in the first half of 2024 to 440,419 in the same period of 2025. The technology that was supposed to make our lives easier is being weaponized against children at a speed that outpaces every protection we have built.

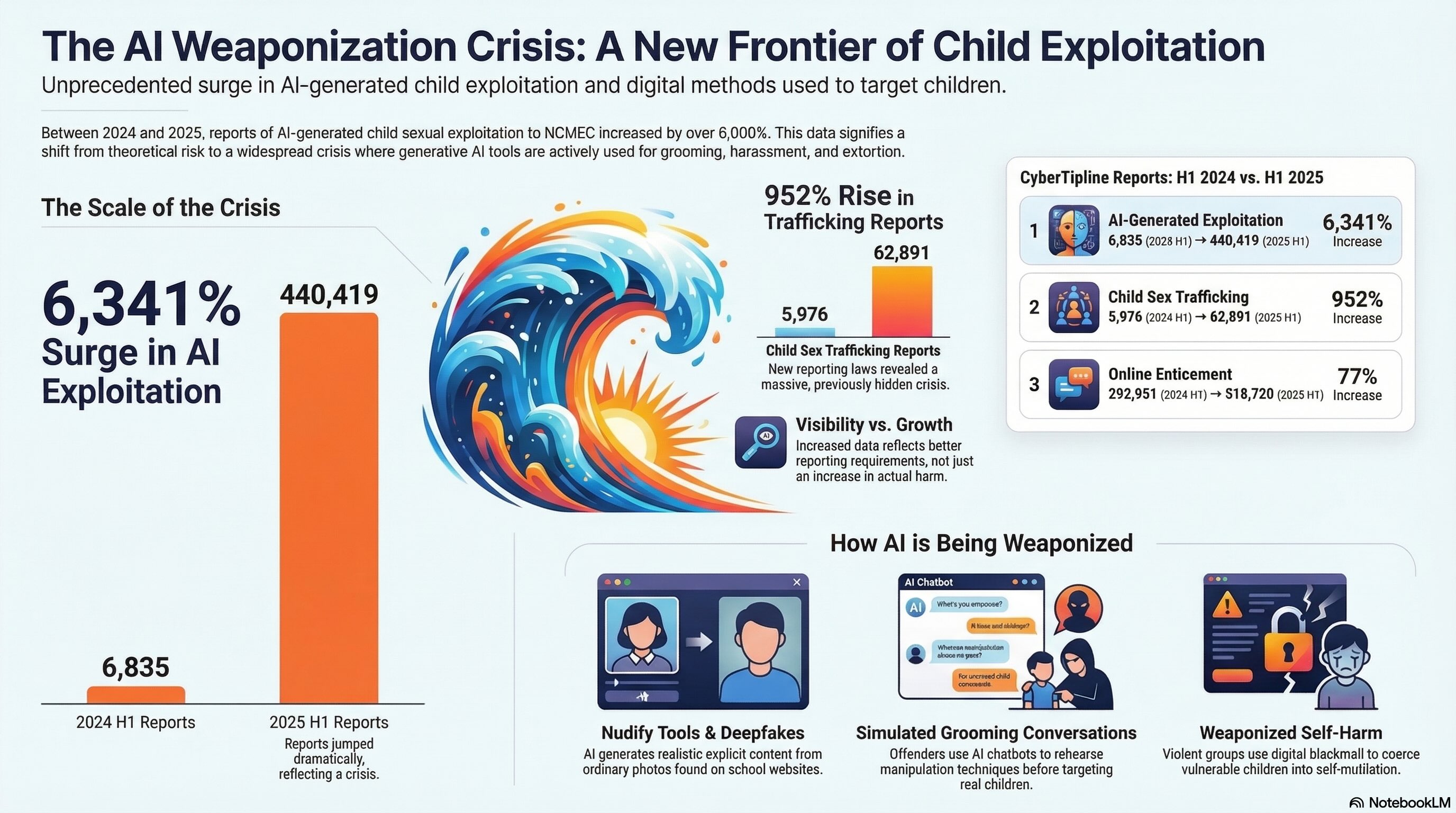

The Scale of What Is Happening

The National Center for Missing & Exploited Children operates the CyberTipline, the federally mandated clearinghouse for reporting online child sexual exploitation in the United States. The numbers the CyberTipline tracks are not estimates or projections. They are reports submitted by platforms, law enforcement, and the public about specific incidents involving real children.

In the first six months of 2025, the CyberTipline data showed increases across every major category of online child exploitation.

AI-Generated Exploitation went from 6,835 reports to 440,419 reports, a 6,341% increase.

Child Sex Trafficking went from 5,976 reports to 62,891 reports, a 952% increase.

Online Enticement went from 292,951 reports to 518,720 reports, a 77% increase.

Financial Sextortion went from 13,842 reports to 23,593 reports, a 70% increase.

For the full year of 2025, NCMEC received more than 113,500 child sex trafficking reports, a 323% increase over 2024. Of those, 93% were submitted by online platforms, a direct result of the REPORT Act requiring platforms to report suspected child sex trafficking for the first time.

When AI Becomes the Weapon

The most alarming shift in the data is the explosion of AI-generated exploitation. This is not theoretical. It is happening in schools, in bedrooms, and on platforms millions of children use every day.

NCMEC's Congressional testimony in December 2025 described several categories of how offenders are using generative AI.

Nudify tools. Applications, many of them freely available, that take a clothed photograph of a person and generate a realistic nude image. Offenders, including other students, are using these tools on classmates' photos pulled from social media and school websites. The resulting images are shared, used for harassment, or leveraged for extortion. This is happening in schools across the country.

Simulated grooming conversations. AI chatbots are being used to simulate sexually explicit conversations with what the user frames as a child persona. These tools allow offenders to rehearse manipulation techniques before deploying them against real children.

Deepfake exploitation. Offenders are creating realistic video and image content depicting children in sexually explicit situations using AI. The Internet Watch Foundation detected over 3,400 AI-generated child sexual abuse videos by the end of 2025, more than half classified at the most severe tier of abuse.

Weaponized self-harm. Violent online groups, including the network known as 764, are targeting vulnerable children through platforms like Discord and Roblox. They befriend victims, elicit private information and images, then use that material to blackmail children into mutilating themselves, harming others, or streaming acts of violence. NCMEC described this as the most egregious exploitation it has seen in its history.

NCMEC is aware of at least 36 teenage boys who have died by suicide since 2021 after being victims of financial sextortion. One mother testified before the Senate Judiciary Committee that her 17-year-old son, James, was sextorted over the course of 19 and a half hours and 400 messages before he took his own life.

The Platforms Are on Trial Right Now

As this post is being written, two landmark trials are underway that could reshape how technology companies are held accountable for harm to children.

In Los Angeles, a 20-year-old woman identified as Kaley is the plaintiff in the first-ever jury trial alleging that social media platforms were deliberately designed to be addictive to children. She says she began using YouTube at age 6 and Instagram at age 9, and that the platforms' design features, including infinite scrolling, beauty filters, push notifications, and algorithmic recommendations, fueled depression, body dysmorphia, and suicidal thoughts. TikTok and Snapchat settled before the trial began. Meta and Google remain as defendants.

Mark Zuckerberg testified before the jury on February 18, 2026. He was questioned about internal documents showing Meta had estimated that more than 4 million children under 13 were using Instagram. He was asked about engagement goals showing the company aimed to increase average daily time spent on Instagram from 40 minutes to 46 minutes. He was asked about beauty filters that Meta's own researchers warned could harm teens' self-perception.

Plaintiffs' attorney Mark Lanier described the case as being about two of the richest corporations in history engineering addiction in children's brains. Legal experts have drawn comparisons to the tobacco litigation of the 1990s.

In a separate trial in New Mexico, the state attorney general alleges that Meta failed to protect children from sexual exploitation on its platforms. The AG's team built their case by posing as children online and documenting the sexual solicitations that followed.

These trials are the first of more than 1,500 similar lawsuits. The outcomes will likely determine the trajectory for hundreds of school district and family claims nationwide.

Why Visibility Matters

There is something important to understand about the NCMEC data: much of the increase in child sex trafficking reports is not because trafficking suddenly got worse. It is because the REPORT Act, signed into law in May 2024, required online platforms to report suspected child sex trafficking for the first time. Before this law, platforms were not legally obligated to flag trafficking activity. Many did not.

NCMEC's Melissa Snow put it directly: the increase does not mean the harm suddenly began. It means it is finally being seen.

The same is true of AI-generated exploitation. NCMEC began tracking AI-related reports in 2023. The tools existed before the tracking did. The abuse existed before the reports did. What the data shows is not a sudden crisis but a crisis that was hidden, one that is now becoming visible because reporting requirements have improved and awareness has grown.

This distinction matters because it speaks to a core principle of Through Their Eyes: you cannot address what you refuse to see. The program's entire premise is that education creates the conditions for recognition, and recognition creates the conditions for response.

We wrote about this dynamic in our previous post, When Digital Spaces Stop Being Safe, which examined the Roblox lawsuit and the broader question of platform accountability. The thread that connects that post to this one is the same: digital environments designed for connection are being exploited by people who prey on vulnerability. The tools change. The platforms change. The core pattern does not.

What Through Their Eyes Is Built to Address

Through Their Eyes was designed before the REPORT Act existed. Before the NCMEC data showed a 6,341% increase in AI exploitation reports. Before Mark Zuckerberg testified before a jury about children on Instagram. But the program was built with this future in mind.

Module 3 of Through Their Eyes covers technology and digital exploitation: how traffickers use social media, gaming platforms, deepfake AI, and online environments to recruit, control, and exploit victims. It addresses the digital pipeline that moves a child from a public platform to a private message to a situation they cannot escape.

The program does not stop at awareness. It is designed to teach people across industries, including hospitality, healthcare, education, law enforcement, social services, and transportation, what to look for, how to respond, and where to report. It is modular, meaning different sectors receive content tailored to their reality. A teacher's warning signs are different from a hotel worker's. Both need to know what they are seeing.

We are also actively seeking introductory conversations with survivors of digital exploitation, including individuals who were exploited through platforms like OnlyFans, Patreon, Fanly, camming sites, or coerced content creation. These stories are not represented in most training programs. Through Their Eyes is being built to change that.

The work we are doing is not reactive to the news cycle. It is designed to outlast it. But moments like this, when the data is this stark, when the trials are this visible, when the federal government is simultaneously acknowledging the crisis and defunding the response, are moments when education and prevention become more urgent than they have ever been.

What You Can Do

Talk to the young people in your life. Not about fear. About how grooming works. About how secrecy is a red flag. About how to recognize when someone online is testing boundaries. The conversation does not need to be perfect. It needs to happen.

Donate directly. Every dollar supports Through Their Eyes and Room To Care's commitment to education, prevention, and survivor-informed advocacy.

roomtocare.com/donate | givebutter.com/RoomToCare2026 | Text "RTC2026" to 53-555

Connect us with survivors. We are seeking introductions to survivors of labor trafficking, familial trafficking, and digital exploitation. If you or someone you know has experience in these areas and is open to a conversation, reach out through roomtocare.com.

Report what you see. If you suspect online child exploitation, report it to the CyberTipline at CyberTipline.org or call 1-800-843-5678 (THE-LOST). If a child is in immediate danger, call 911.

Share this post. The data in this post is public. The more people who see it, the harder it becomes to ignore.

Stay informed.

Room To Care: roomtocare.com | Through Their Eyes: throughtheireyes.co | National Center for Missing & Exploited Children: missingkids.org | Rebecca Bender Initiative: rebeccabender.org | New Friends New Life: newfriendsnewlife.org

Sources

National Center for Missing & Exploited Children. CyberTipline Annual Data and Mid-Year 2025 Reports.

NCMEC. Spike in Online Crimes Against Children a Wake-Up Call (2025).

NCMEC. Seeing What Others Can't: NCMEC's Role in Stopping Child Sex Trafficking (2026).

NCMEC. NCMEC on the Hill: Online Child Exploitation Escalating, More Violent (December 2025).

U.S. Senate Judiciary Committee. Protecting Our Children Online Against the Evolving Offender (December 9, 2025).

ABC News. Top Senator Says US Has Miserably Failed Our Children (December 9, 2025).

CNN. A Bellwether Social Media Addiction Trial Is Underway (February 22, 2026).

PBS NewsHour. Landmark Trial Accusing Tech Giants of Harming Children (February 2026).

Democracy Now. Predatory Tech: Silicon Valley on Trial (February 19, 2026).

Internet Watch Foundation / IBTimes UK. Reports on AI-Generated CSAM (March 2026).

Room To Care. When Digital Spaces Stop Being Safe (February 20, 2026).
